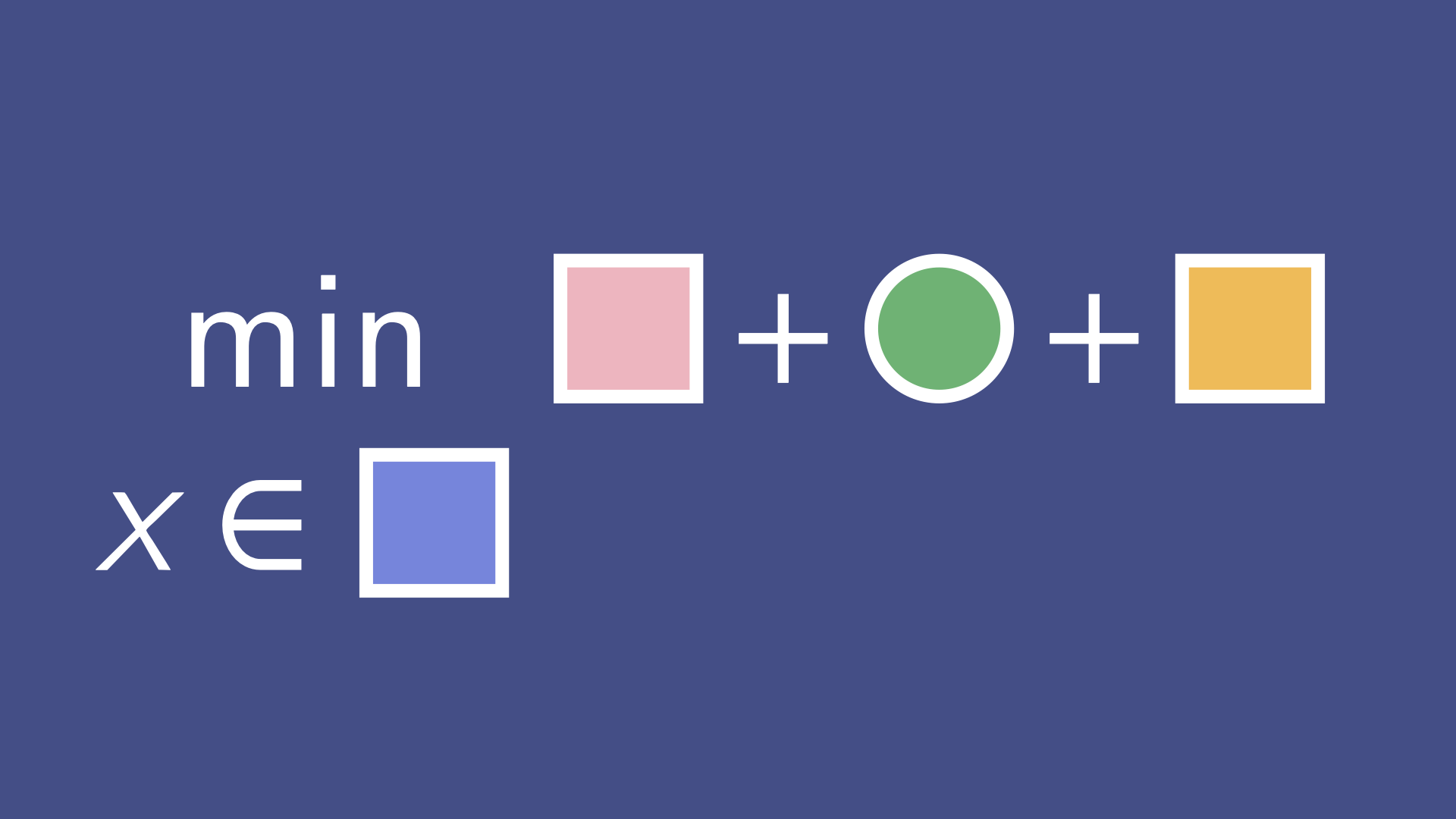

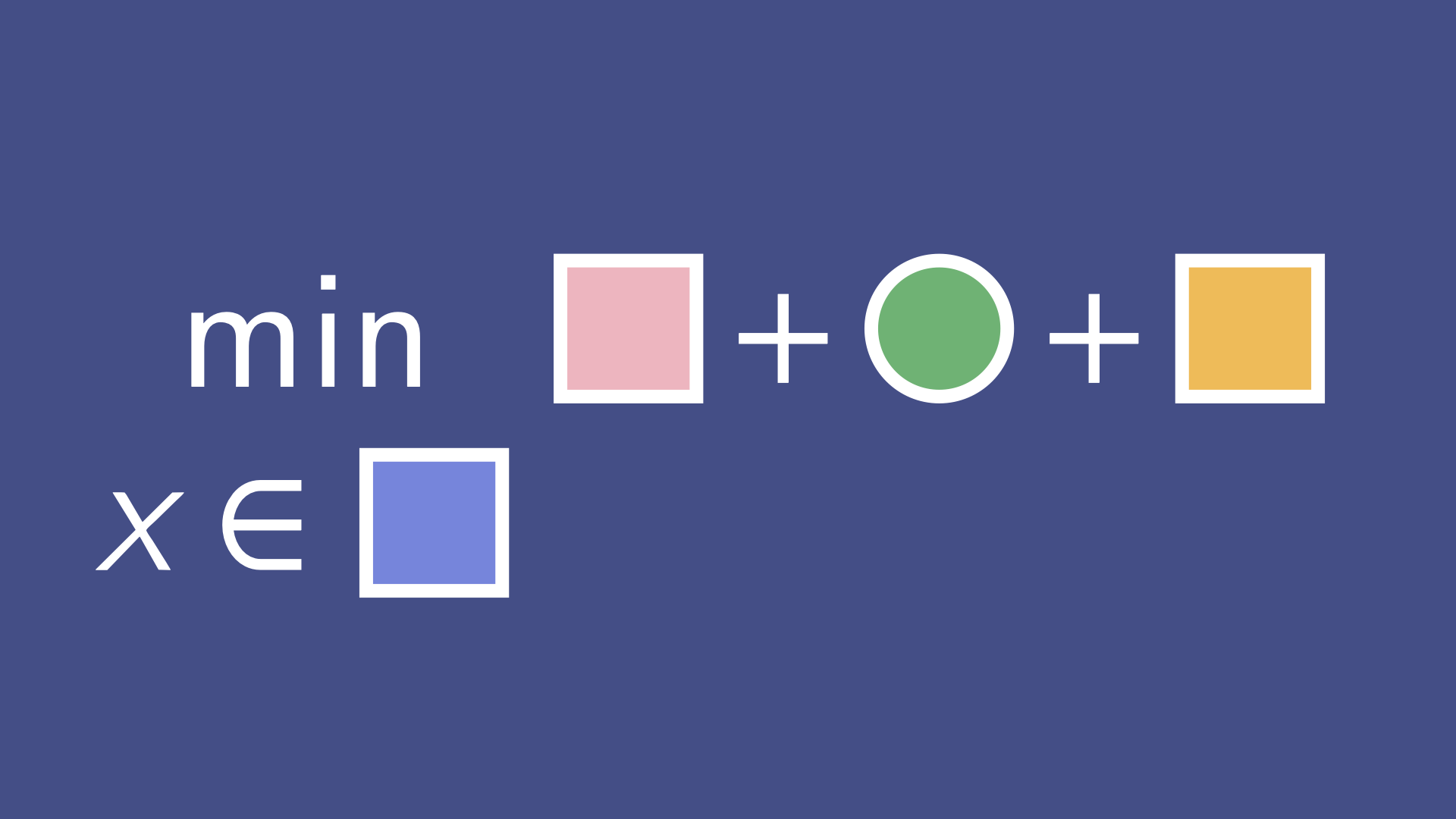

Problem Decomposition

Learn to break down optimization problems into recognizable parts, including smooth terms, nonsmooth penalties, and constraints, and map each one to a corresponding operator.

The Operator's Toolbox

The Operator's Toolbox teaches you how modern operator-splitting methods work under the hood so you can build solvers with structure and predictability. Learn to decompose problems, choose the right operator tools, and assemble algorithms with built-in guarantees, not guesswork.

Coming Soon

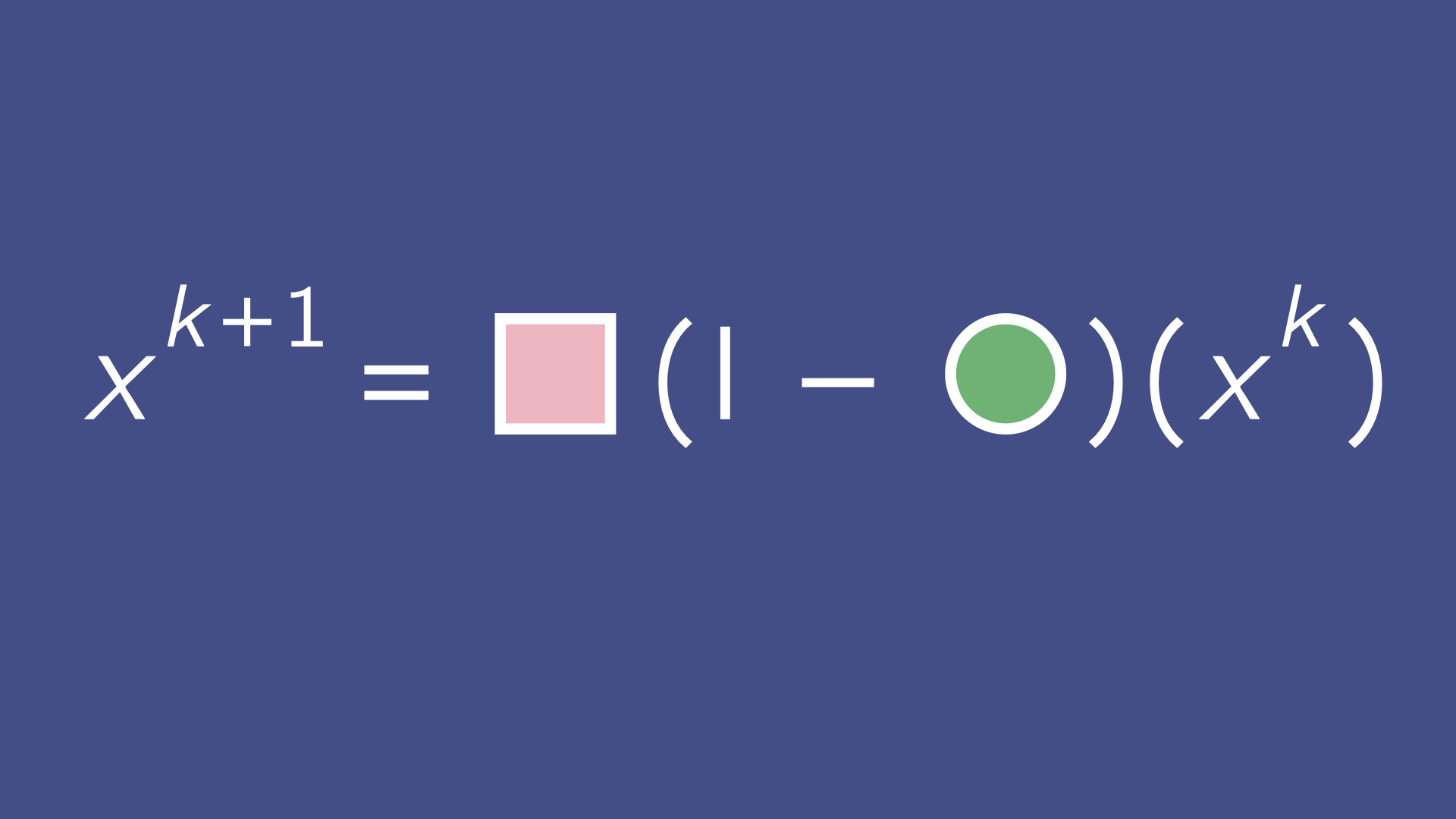

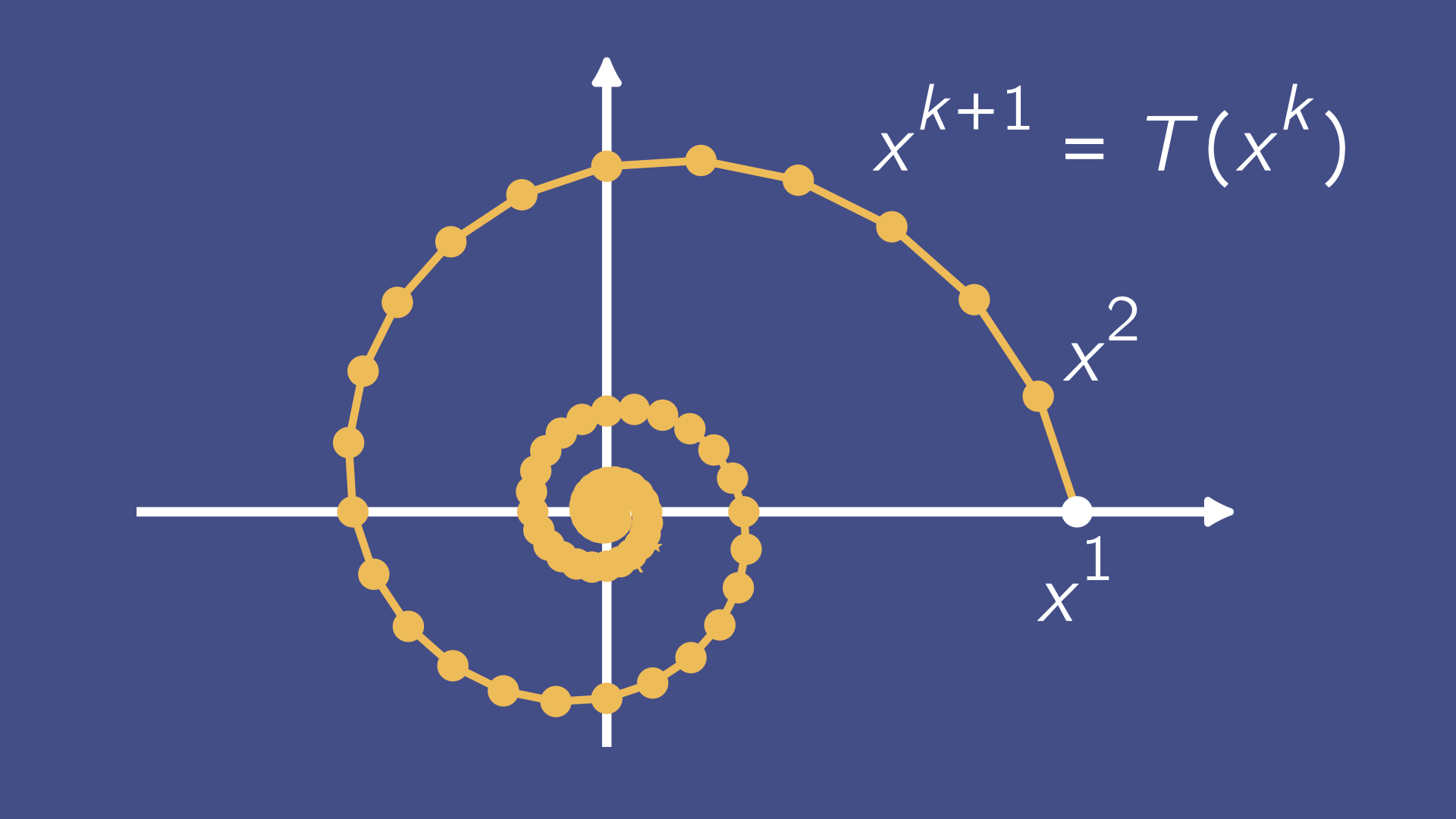

Learn how to combine operators into convergence-guaranteed algorithms using splitting methods. Understand what makes the pieces fit both formally and computationally.

Understand how properties like monotonicity, cocoercivity, and nonexpansiveness drive convergence so you can debug, tweak, and adapt algorithms with confidence.

Inside the Toolbox

The Operator's Toolbox is a structured, self-paced video course with 14 hours of content. Lectures pair clear explanations with illustrations, and downloadable examples and problems let you apply what you learn right away. You'll get lifetime access and a certificate of completion for continuing education reimbursement.

Howard Heaton has degrees in computer science, mathematics, and physics. He earned his master's and PhD at UCLA, where he studied optimization under Stanley Osher and Wotao Yin. Today he works in tech building optimization algorithms for large-scale problems and teaches mathematics and optimization through Typal Academy.

Howard's Personal Site

This course is for engineers, researchers, and technically skilled practitioners who use convex optimization and want to understand how solvers actually work. It's ideal if you've used gradient descent, CVXPY, or PyTorch optimizers, work with constraints or regularizers, and need to build or adapt algorithms rather than call a black-box solver.

You'll learn how to decompose problems into modular components, build robust algorithms by combining operators, translate theory into reusable code, and use properties such as averagedness and cocoercivity to reason about stability.
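As a rough illustration of what "modular components" can mean, the sketch below pairs each part of a small problem with one operator: a gradient for the smooth term, a proximal operator for the nonsmooth penalty, and a projection for the constraint. The example problem and every function name are assumptions made for this illustration, not material taken from the course.

```python
# Illustrative problem (an assumption for this sketch):
#   minimize 0.5*||x - b||^2 + lam*||x||_1   subject to   lo <= x_i <= hi
# Each piece of the problem is mapped to one operator.

def grad_least_squares(x, b):
    """Smooth term 0.5*||x - b||^2  ->  its gradient, x - b."""
    return [xi - bi for xi, bi in zip(x, b)]

def prox_l1(x, t):
    """Nonsmooth penalty t*||x||_1  ->  its proximal operator (soft-thresholding)."""
    out = []
    for xi in x:
        if xi > t:
            out.append(xi - t)
        elif xi < -t:
            out.append(xi + t)
        else:
            out.append(0.0)
    return out

def project_box(x, lo, hi):
    """Constraint lo <= x_i <= hi  ->  projection onto the box (clipping)."""
    return [min(max(xi, lo), hi) for xi in x]

print(grad_least_squares([1.0, 2.0], [0.5, 2.0]))
print(prox_l1([3.0, -0.5, -2.0], 1.0))
print(project_box([-2.0, 0.3, 5.0], 0.0, 1.0))
```

Each function takes and returns a plain list, so the pieces can be composed in different orders without knowing anything about one another.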

The course combines intuitive diagrams with implementation-oriented explanations. It goes beyond basic gradient descent and covers practical splitting methods including Forward-Backward, Douglas-Rachford, ADMM-style thinking, and related operator frameworks.
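To make the Forward-Backward idea concrete, here is a minimal sketch under stated assumptions: the objective is a separable weighted least-squares term plus an l1 penalty, chosen because its minimizer has a closed form to compare against. The problem data and the names `soft_threshold` and `forward_backward` are hypothetical and are not drawn from the course.

```python
# Forward-backward splitting (proximal gradient) on an assumed toy problem:
#   minimize  sum_i 0.5*a_i*(x_i - b_i)^2  +  lam*sum_i |x_i|,   with a_i > 0
# The smooth term has a Lipschitz gradient with constant L = max(a_i).

def soft_threshold(v, t):
    """Proximal operator of t*|.| applied to a scalar v."""
    if v > t:
        return v - t
    if v < -t:
        return v + t
    return 0.0

def forward_backward(a, b, lam, step, iters=500):
    x = [0.0] * len(b)
    for _ in range(iters):
        # Forward step: explicit gradient step on the smooth term.
        y = [xi - step * ai * (xi - bi) for xi, ai, bi in zip(x, a, b)]
        # Backward step: proximal operator of step*lam*||.||_1.
        x = [soft_threshold(yi, step * lam) for yi in y]
    return x

a = [1.0, 2.0, 4.0]
b = [3.0, -0.2, 1.0]
lam = 1.0
step = 1.0 / max(a)  # any step in (0, 2/L) is admissible; 1/L is a common choice

x = forward_backward(a, b, lam, step)
# Closed-form minimizer of this separable problem, used only as a check.
expected = [soft_threshold(bi, lam / ai) for ai, bi in zip(a, b)]
print(x)
print(expected)
```

The loop body is just the composition of the two operators, which is why swapping in a different penalty only requires replacing the proximal step.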

Comfort with linear algebra, multivariable calculus, and undergraduate analysis is recommended. The course targets mathematically mature learners who want rigorous, practical understanding.

Yes. You receive a completion certificate and receipt suitable for employer reimbursement, plus optional supporting documentation upon request.

Release is planned for 2026, and the exact timing depends on pre-order interest. Enrolling early helps prioritize launch readiness.

No. The course focuses on continuous optimization with first-order and monotone-operator methods, not integer programming methods such as branch-and-bound or cutting planes.

Start with The Operator's Toolbox and get structured guidance from foundations to modern splitting methods.